Brief overviews of our research

Quantum computing theory

Quantum algorithms for universal quantum computers

As quantum hardware progresses from the noisy intermediate-scale quantum (NISQ) era toward early fault tolerance, a critical challenge remains: designing algorithms that are both resource-efficient and capable of solving complex, general problems. Our research bridges this gap by developing quantum algorithms tailored for general fault-tolerant architectures, with a specific emphasis on open quantum systems, non-Hermitian dynamics, and practical quantum chemistry.

A primary thrust of our work is extending quantum simulation beyond standard closed systems to describe realistic interactions with environments. To this end, we introduced a “Linear Combination of Superoperators” framework for simulating Lindbladian dynamics, which achieves logarithmic scaling in precision while requiring only two ancilla qubits [1]. Complementing this, we developed a divide-and-conquer algorithm for general non-Hermitian eigenvalue problems, which are essential for PT-symmetric physics and Markov processes, and demonstrated a provable exponential speedup over classical methods [2].

To ensure these theoretical advances are experimentally viable, we also focus on efficient observable extraction and domain-specific applications. We devised an ancilla-free method for computing n-time correlation functions, which allows complex dynamical information to be retrieved on hardware with limited connectivity [3]. Finally, we survey the algorithmic landscape for quantum molecular systems, covering ground-state estimation and Hamiltonian simulation, to identify concrete opportunities for quantum advantage in the early fault-tolerant regime [4].
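The Lindbladian dynamics targeted in [1] obey dρ/dt = −i[H, ρ] + Σ_k (L_k ρ L_k† − ½{L_k†L_k, ρ}). The sketch below is a minimal classical reference for checking small cases, not the quantum algorithm of [1]. It vectorizes ρ, builds the corresponding superoperator, and propagates ρ with a truncated Taylor series of e^{ℒΔt}. The function names, the single-qubit amplitude-damping example, and all parameter values are illustrative assumptions.

```python
import numpy as np

def liouvillian(H, jumps):
    """Superoperator L with d vec(rho)/dt = L vec(rho), using column-stacking vec."""
    d = H.shape[0]
    eye = np.eye(d)
    # Coherent part: -i[H, rho] -> -i (I (x) H - H^T (x) I)
    L = -1j * (np.kron(eye, H) - np.kron(H.T, eye))
    for Lk in jumps:
        LdL = Lk.conj().T @ Lk
        # Dissipator: Lk rho Lk^dag - 1/2 {Lk^dag Lk, rho}
        L = L + np.kron(Lk.conj(), Lk) - 0.5 * np.kron(eye, LdL) - 0.5 * np.kron(LdL.T, eye)
    return L

def evolve(rho0, H, jumps, t, steps=100, taylor_order=8):
    """Propagate rho0 to time t, applying a truncated Taylor series of exp(L*dt) per step."""
    d = rho0.shape[0]
    L = liouvillian(H, jumps)
    dt = t / steps
    step_op = np.eye(d * d, dtype=complex)
    term = np.eye(d * d, dtype=complex)
    for k in range(1, taylor_order + 1):
        term = term @ (L * dt) / k          # (L dt)^k / k!
        step_op = step_op + term
    v = rho0.astype(complex).reshape(-1, order="F")
    for _ in range(steps):
        v = step_op @ v
    return v.reshape(d, d, order="F")

# Example (illustrative values): amplitude damping of one qubit at rate gamma
gamma = 0.5
sigma_minus = np.sqrt(gamma) * np.array([[0.0, 1.0], [0.0, 0.0]])  # |0><1|
H = np.zeros((2, 2))
rho0 = np.diag([0.0, 1.0])                  # start in the excited state |1><1|
rho_t = evolve(rho0, H, [sigma_minus], t=2.0)
print(rho_t[1, 1].real, np.exp(-gamma * 2.0))  # excited population vs. exact exp(-gamma t)
```

Column-stacking vectorization is assumed throughout, so vec(AρB) = (Bᵀ ⊗ A) vec(ρ). A row-stacking convention would swap the Kronecker factors.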

- W. Yu, X. Li, Q. Zhao, X. Yuan, Exponentially reduced circuit depths in Lindbladian simulation, Phys. Rev. Lett. 135 (16), 160602, 2025;

- X.-M. Zhang, Y. Zhang, W. He, X. Yuan, Exponential Quantum Advantages for Practical Non-Hermitian Eigenproblems, Phys. Rev. Lett. 135, 140601, 2025;

- X. Wang, L. Xiong, X. Cai, X. Yuan, Computing n-time correlation functions without ancilla qubits, Phys. Rev. Lett. 135 (23), 230602, 2025;

- Y. Zhang, X. Zhang, J. Sun, H. Lin, Y. Huang, D. Lv, X. Yuan, Fault-tolerant quantum algorithms for quantum molecular systems: A survey, Wiley Interdisciplinary Reviews: Computational Molecular Science 15 (3), e70020, 2025;

Quantum algorithms for near-term quantum computers

Standard quantum algorithms for solving many-body problems typically demand deep quantum circuits, necessitating fault-tolerant hardware to correct errors. However, current quantum devices are in the Noisy Intermediate-Scale Quantum (NISQ) era, characterized by limited qubit counts and short coherence times. To bridge this gap, our research focuses on designing advanced hybrid quantum-classical algorithms. By strategically partitioning computational complexity between classical and quantum processors, we aim to solve practical, complex quantum many-body problems using currently available hardware.

Variational Quantum Algorithms and Dynamic Simulation

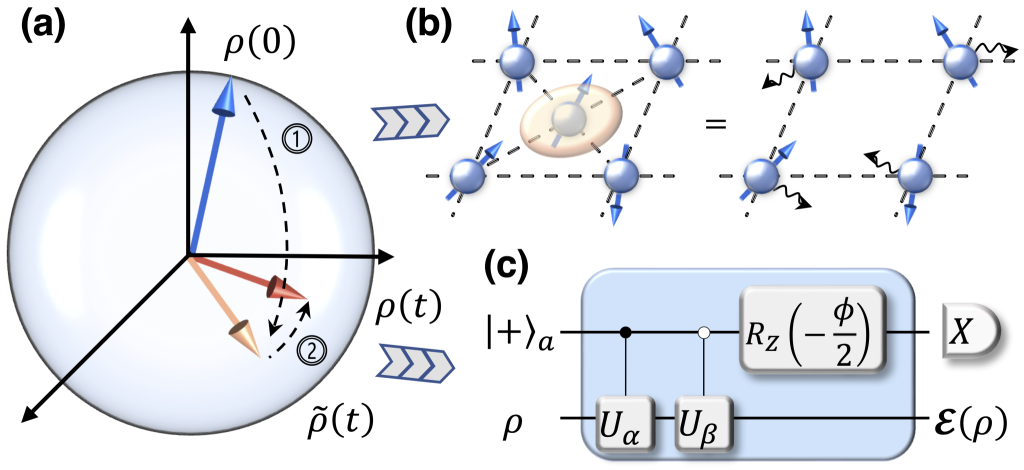

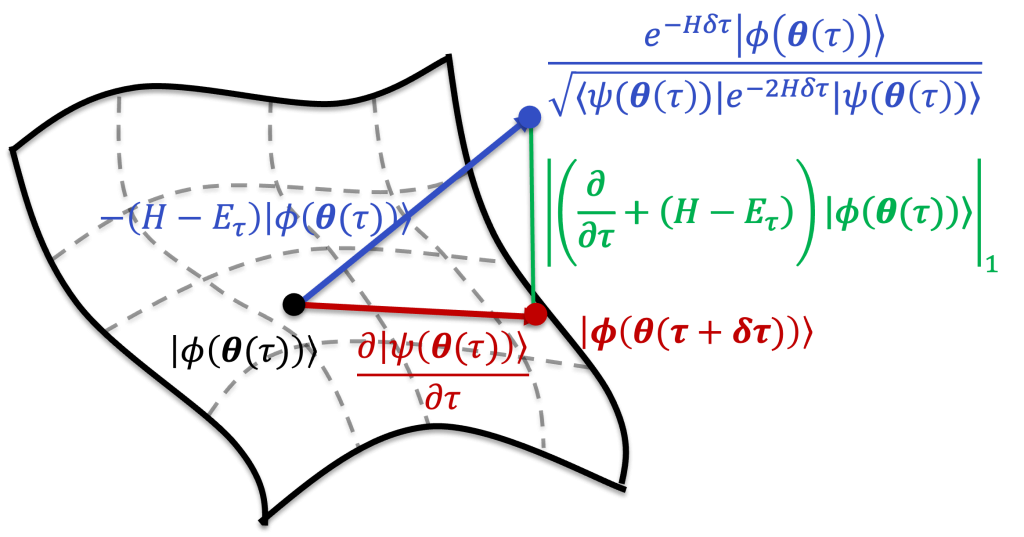

Recognizing the shallow-depth constraints of near-term hardware, we have systematically advanced the theory and application of Variational Quantum Algorithms (VQAs). Conventional VQAs often struggle with difficult optimization landscapes. To address this, we introduced a variational quantum imaginary-time evolution algorithm [1]. Rather than relying on a generic gradient-descent optimizer, this method follows the geometry of imaginary-time evolution, which helps it avoid the local minima common in gradient-based training, and it demonstrates significant numerical advantages for electronic structure problems. Furthermore, we established a unified theoretical framework for variational quantum simulation [2] that generalizes the principles of real- and imaginary-time evolution. We extended this framework to simulate open quantum system dynamics governed by the Lindblad equation [3]. We also developed adaptive strategies for quantum dynamics that substantially reduce circuit depth compared to standard Trotterization [4]. In recognition of these contributions, we were invited to write comprehensive reviews of variational quantum algorithms and of hybrid quantum-classical algorithms with error mitigation [5, 6].
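Variational imaginary-time evolution is commonly written through McLachlan's variational principle as a linear system A θ̇ = C for the parameter velocities. The sketch below integrates that system with explicit Euler steps for a one-qubit toy ansatz and estimates derivatives of the state by finite differences. It assumes a real-amplitude ansatz, so the phase-correction terms drop out. The names (`var_qite`, `ry_state`) are hypothetical. On hardware, A and C would be estimated from circuit measurements rather than from a state vector.

```python
import numpy as np

def var_qite(state_fn, H, theta0, tau, steps=300, fd_eps=1e-6, reg=1e-8):
    """Euler-integrate McLachlan's equations A theta_dot = C for imaginary-time evolution.

    A_ij = Re<d_i psi|d_j psi>, C_i = -Re<d_i psi|H|psi>. A real-amplitude ansatz is
    assumed, so the global-phase correction terms are omitted.
    """
    theta = np.array(theta0, dtype=float)
    dt = tau / steps
    energies = []
    for _ in range(steps):
        psi = state_fn(theta)
        # Central finite differences for the parameter derivatives of the state
        derivs = []
        for i in range(len(theta)):
            shift = np.zeros_like(theta)
            shift[i] = fd_eps
            derivs.append((state_fn(theta + shift) - state_fn(theta - shift)) / (2 * fd_eps))
        D = np.array(derivs)
        A = np.real(D.conj() @ D.T)
        C = -np.real(D.conj() @ (H @ psi))
        # Small Tikhonov term guards against an ill-conditioned A
        theta_dot = np.linalg.solve(A + reg * np.eye(len(theta)), C)
        theta = theta + dt * theta_dot
        energies.append(float(np.real(np.vdot(psi, H @ psi))))
    return theta, energies

def ry_state(theta):
    """Single-qubit toy ansatz |psi(theta)> = Ry(theta)|0>."""
    return np.array([np.cos(theta[0] / 2), np.sin(theta[0] / 2)])

Z = np.diag([1.0, -1.0])
theta, energies = var_qite(ry_state, Z, theta0=[0.1], tau=3.0)
print(energies[0], energies[-1])   # energy decreases toward the ground-state value -1
```

For this toy case the equations reduce to θ̇ = 2 sin θ. That is exactly the flow obtained by normalizing e^{−Zτ}|ψ(θ₀)⟩, so the parameter drifts toward θ = π, the ground state |1⟩.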

Overcoming Qubit and Depth Limitations via Hybrid Architectures

While VQAs keep circuits shallow by delegating parameter training to classical optimizers, they remain bounded by the physical resources of the chip. We have proposed general hybrid frameworks that push beyond the limits of qubit count and circuit depth.

Addressing Qubit Constraints: The scale of a quantum simulation is typically limited by the number of available qubits (e.g., under the Jordan-Wigner encoding, simulating 100 spin-orbitals requires 100 qubits). However, physical systems often exhibit non-uniform correlation structures. Leveraging this, we developed methods that partition the problem: the quantum processor is reserved for the most strongly correlated subsystems, and the rest is treated classically. We introduced a hybrid architecture combining quantum circuits with tensor networks [7] and a framework integrating quantum simulation with perturbation theory [8]. These approaches allow small-scale quantum devices to accurately simulate the static and dynamic properties of much larger systems, such as the Bose-Hubbard model and complex spin lattices.

Addressing Circuit Depth and Noise: Deep quantum circuits are necessary to represent complex quantum correlations but are susceptible to noise accumulation. To mitigate this, we developed techniques to “virtualize” circuit depth, effectively trading classical post-processing for reduced quantum gate depth. We proposed a hybrid Quantum Monte Carlo algorithm that uses quantum computers to guide statistical sampling [9] and a hybrid Schrödinger-Heisenberg picture for circuit evolution [10]. These methods enable high-precision chemical and material calculations using significantly shallower circuits, providing a practical pathway to quantum advantage on noisy hardware.
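The hybrid Schrödinger-Heisenberg picture of [10] rests on a simple identity. If a circuit factors as U = U_H U_S, then ⟨ψ₀|U†OU|ψ₀⟩ equals the expectation value of the Heisenberg-evolved observable U_H†OU_H in the shallower state U_S|ψ₀⟩. The sketch below checks this numerically, using random dense unitaries as placeholders. In practice U_H would be chosen so that the evolved observable stays classically tractable, and this toy example does not model that choice.

```python
import numpy as np

def random_unitary(dim, rng):
    """Random unitary from the QR decomposition of a complex Gaussian matrix."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, r = np.linalg.qr(z)
    return q * (np.diag(r) / np.abs(np.diag(r)))  # fix column phases

rng = np.random.default_rng(7)
dim = 8                                  # three qubits
psi0 = np.zeros(dim, dtype=complex)
psi0[0] = 1.0
U_S = random_unitary(dim, rng)           # part run on the device (Schrodinger picture)
U_H = random_unitary(dim, rng)           # part absorbed into the observable (Heisenberg picture)
O = np.diag(rng.normal(size=dim))        # some Hermitian observable

# Full-circuit expectation value <psi0| (U_H U_S)^dag O (U_H U_S) |psi0>
psi_full = U_H @ U_S @ psi0
full = np.vdot(psi_full, O @ psi_full).real

# Split version: evolve O backwards through U_H classically, then measure after U_S only
O_heis = U_H.conj().T @ O @ U_H
phi = U_S @ psi0
split = np.vdot(phi, O_heis @ phi).real
print(full, split)   # equal up to floating-point rounding
```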

- S. McArdle, S. Endo, T. Jones, Y. Li, S. Benjamin, X. Yuan, Variational quantum simulation of imaginary time evolution with applications in chemistry and beyond, npj Quantum Information 5 (1), 1-6, 2019;

- X. Yuan, S. Endo, Q. Zhao, S. Benjamin, Y. Li, Theory of variational quantum simulation, Quantum 3, 191, 2019;

- S. Endo, Y. Li, S. Benjamin, X. Yuan, Variational quantum simulation of general processes, Phys. Rev. Lett. 125, 010501, 2020;

- Z. Zhang, J. Sun, X. Yuan, M. Yung, Low-Depth Hamiltonian Simulation by an Adaptive Product Formula, Phys. Rev. Lett. 130, 040601, 2023;

- M. Cerezo, A. Arrasmith, R. Babbush, S. C. Benjamin, S. Endo, K. Fujii, J. R. McClean, K. Mitarai, X. Yuan, L. Cincio, P. J. Coles, Variational Quantum Algorithms, Nature Reviews Physics 1-20, 2021;

- S. Endo, Z. Cai, S. C. Benjamin, X. Yuan, Hybrid quantum-classical algorithms and quantum error mitigation, J. Phys. Soc. Jpn. 90 (3), 032001, 2021;

- X. Yuan, J. Sun, J. Liu, Q. Zhao, Y. Zhou, Quantum simulation with hybrid tensor networks, Phys. Rev. Lett. 127, 040501, 2021;

- J. Sun, S. Endo, H. Lin, P. Hayden, V. Vedral, X. Yuan, Perturbative quantum simulation, Phys. Rev. Lett. 129 (12), 120505, 2022;

- Y. Zhang, Y. Huang, J. Sun, D. Lv, X. Yuan, Quantum Computing Quantum Monte Carlo, Phys. Rev. A 112 (2), 022428, 2025;

- Z. Shang, M. Chen, X. Yuan, C. Lu, J. Pan, Schrödinger-Heisenberg Variational Quantum Algorithms, Phys. Rev. Lett. 131 (6), 060406, 2023;

Quantum toolkit: error mitigation, state preparation, and measurement

Realizing the potential of quantum computing requires more than hardware; it demands a comprehensive algorithmic toolkit that bridges the gap between abstract theoretical models and the constraints of the Noisy Intermediate-Scale Quantum (NISQ) era. Our research develops this essential infrastructure by addressing three critical bottlenecks: hardware errors, the cost of loading data, and the cost of extracting information. We aim to build a robust software layer that maximizes the utility of near-term devices through error mitigation, optimized state preparation, and advanced measurement schemes.

Quantum Error Mitigation

Since fully fault-tolerant quantum error correction remains resource-prohibitive for near-term devices, software-based mitigation is essential for achieving accuracy. In [1], we proposed a stabilizer-like mitigation method tailored for NISQ hardware, capable of detecting 60%–80% of depolarizing errors. This approach is particularly effective against correlated noise when combined with extrapolation techniques. Complementing this, we developed a stochastic error mitigation scheme in [2] designed to suppress realistic, non-local noise sources such as crosstalk. By relying on accurate single-qubit controls, this method improves simulation accuracy by up to two orders of magnitude. Furthermore, in [3, 4], we introduced the paradigm of “virtual resource distillation.” This approach bypasses the fundamental limitations and no-go theorems of physical distillation by utilizing classical post-processing to approximate the statistics of high-quality pure resource states from noisy outputs, effectively serving as a powerful mitigation strategy for state preparation and storage.
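Richardson extrapolation is one example of the extrapolation techniques that can be combined with the method of [1]. It takes expectation values measured at amplified noise levels λ_j, fits them, and evaluates the fit at λ = 0. The sketch below is the standard Lagrange-form estimator and is not necessarily the exact variant used in [1]. The noise scales and the synthetic data are assumptions.

```python
import numpy as np

def richardson_zero_noise(scales, values):
    """Extrapolate expectation values measured at noise scales lambda_j to lambda = 0.

    Lagrange interpolation evaluated at zero:
    E(0) ~ sum_j gamma_j E(lambda_j), gamma_j = prod_{m != j} lambda_m / (lambda_m - lambda_j).
    """
    scales = np.asarray(scales, dtype=float)
    values = np.asarray(values, dtype=float)
    estimate = 0.0
    for j, lam_j in enumerate(scales):
        others = np.delete(scales, j)
        gamma_j = np.prod(others / (others - lam_j))
        estimate += gamma_j * values[j]
    return estimate

# Hypothetical noisy data following E(lambda) = 0.8 - 0.1 lambda + 0.02 lambda^2
scales = [1.0, 1.5, 2.0]
values = [0.8 - 0.1 * s + 0.02 * s**2 for s in scales]
print(richardson_zero_noise(scales, values))  # recovers 0.8 up to floating-point rounding
```

With m noise scales the estimate is exact whenever the noisy expectation value is a polynomial of degree below m in λ. In practice the coefficients γ_j (here 6, −8, and 3) amplify shot noise, which limits how many scales are useful.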

Quantum State Preparation

The efficiency of loading classical data into quantum systems is a defining factor for algorithms ranging from Hamiltonian simulation to machine learning. Our work focuses on optimizing the circuit complexity of this subroutine. In [5], we introduced a deterministic algorithm that achieves the optimal circuit depth of Θ(n) for arbitrary n-qubit states and Θ(log(nd)) for d-sparse states. The latter represents an exponential improvement over the previous best-known results. Additionally, in [6], we presented a family of protocols whose Clifford+T gate counts scale as O(N log(1/ε)), linear in the data size N, while offering tunable ancilla-qubit requirements that enable flexible space-time trade-offs.
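For orientation, the sketch below implements the textbook binary-tree decomposition of amplitude encoding (in the style of Grover and Rudolph) for real, non-negative amplitudes. Each level of the tree fixes one Ry angle per node from the probability mass of that node's two subtrees. This is not the Θ(n)-depth algorithm of [5]. Compiled without ancillas, this construction uses on the order of 2ⁿ elementary gates. The helper names are hypothetical.

```python
import numpy as np

def tree_angles(amps):
    """Ry angles of the binary-tree decomposition for real, non-negative amplitudes."""
    amps = np.asarray(amps, dtype=float)
    n = int(round(np.log2(len(amps))))
    assert 2 ** n == len(amps), "length must be a power of two"
    probs = amps ** 2 / np.sum(amps ** 2)
    angles = []
    for level in range(n):
        block = 2 ** (n - level)          # leaves below each node at this level
        level_angles = []
        for start in range(0, 2 ** n, block):
            parent = probs[start:start + block].sum()
            left = probs[start:start + block // 2].sum()
            # cos^2(theta/2) = probability of branching to the "0" child
            ratio = 1.0 if parent == 0 else min(max(left / parent, 0.0), 1.0)
            level_angles.append(2 * np.arccos(np.sqrt(ratio)))
        angles.append(level_angles)
    return angles

def amplitudes_from_angles(angles):
    """Amplitudes produced by applying the controlled Ry rotations level by level to |0...0>."""
    n = len(angles)
    out = np.ones(2 ** n)
    for idx in range(2 ** n):
        for level in range(n):
            node = idx >> (n - level)             # prefix formed by the first `level` bits
            bit = (idx >> (n - level - 1)) & 1    # branch taken at this level
            half = angles[level][node] / 2
            out[idx] *= np.sin(half) if bit else np.cos(half)
    return out

target = np.array([0.1, 0.3, 0.0, 0.5, 0.2, 0.6, 0.4, 0.3])
target = target / np.linalg.norm(target)
angles = tree_angles(target)
print(np.allclose(amplitudes_from_angles(angles), target))
```

Signed or complex amplitudes would require an additional layer of phase rotations, which this sketch omits.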

Quantum Measurement

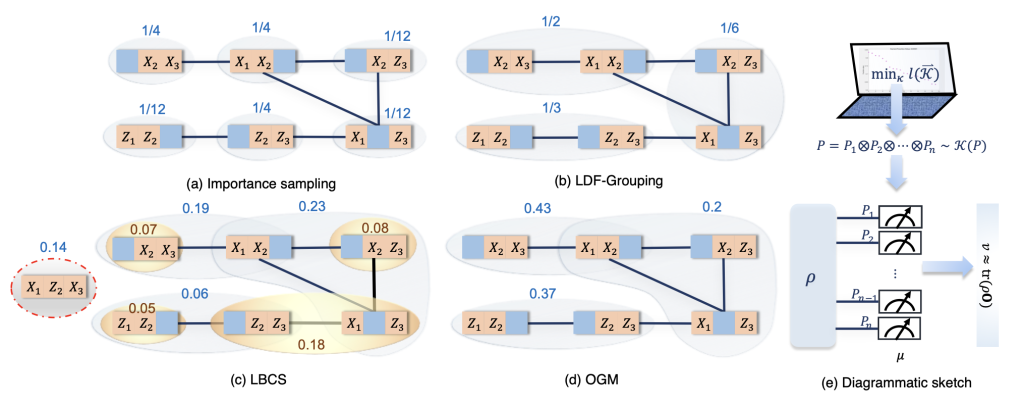

Extracting information from complex multi-qubit observables, particularly in quantum chemistry, often incurs prohibitive sampling costs that obscure quantum advantage. To resolve this, we introduced the Overlapped Grouping Measurement (OGM) framework in [7]. OGM unifies the strengths of importance sampling, observable compatibility, and classical shadows. Numerical tests show that this framework yields significantly higher accuracy and faster convergence than existing state-of-the-art methods, such as largest-degree-first (LDF) grouping and derandomized classical shadows, thereby reducing the measurement overhead required for practical applications.
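Qubit-wise commutativity is the compatibility rule behind grouping methods such as LDF. Pauli strings that agree (or carry an identity) on every qubit can be estimated from one measurement setting. The sketch below builds LDF groups greedily and then counts how many of the resulting settings are compatible with each term. A term covered by several settings, like IZ here, can draw on all of them, which is the kind of overlap OGM [7] exploits. The sketch does not reproduce OGM's optimized sampling probabilities or its estimator. The function names and Pauli strings are assumptions.

```python
from itertools import combinations

def qubitwise_commute(p, q):
    """Pauli strings such as 'XZI' commute qubit-wise if every position matches or holds an 'I'."""
    return all(a == "I" or b == "I" or a == b for a, b in zip(p, q))

def ldf_grouping(paulis):
    """Greedy largest-degree-first grouping into qubit-wise commuting sets."""
    degree = {p: 0 for p in paulis}
    for p, q in combinations(paulis, 2):
        if not qubitwise_commute(p, q):   # degree counts conflicts
            degree[p] += 1
            degree[q] += 1
    groups = []
    for p in sorted(paulis, key=lambda s: -degree[s]):   # stable sort keeps input order on ties
        for group in groups:
            if all(qubitwise_commute(p, q) for q in group):
                group.append(p)
                break
        else:
            groups.append([p])
    return groups

def measurement_basis(group):
    """Single-qubit basis per position; positions holding only 'I' default to 'Z'."""
    n = len(group[0])
    return "".join(next((p[k] for p in group if p[k] != "I"), "Z") for k in range(n))

paulis = ["ZI", "IZ", "ZZ", "XZ"]
groups = ldf_grouping(paulis)
bases = [measurement_basis(g) for g in groups]
# Overlap: how many measurement settings can estimate each Pauli term
coverage = {p: sum(qubitwise_commute(p, b) for b in bases) for p in paulis}
print(groups, bases, coverage)
```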

- S. McArdle, X. Yuan, S. Benjamin, Error mitigated digital quantum simulation, Phys. Rev. Lett. 122, 180501, 2019;

- J. Sun, X. Yuan, T. Tsunoda, V. Vedral, S. C. Benjamin, S. Endo, Practical quantum error mitigation for analog quantum simulation, Phys. Rev. Applied 15, 034026, 2021;

- X. Yuan, B. Regula, R. Takagi, M. Gu, Virtual quantum resource distillation, Phys. Rev. Lett. 132 (5), 050203, 2024;

- R. Takagi, X. Yuan, B. Regula, M. Gu, Virtual quantum resource distillation, Phys. Rev. A 109 (2), 022403, 2024;

- X.-M. Zhang, T. Li, X. Yuan, Quantum State Preparation with Optimal Circuit Depth: Implementations and Applications, Phys. Rev. Lett. 129 (23), 230504, 2022;

- X.-M. Zhang, X. Yuan, On circuit complexity of quantum access models for encoding classical data, npj Quantum Information 10 (1), 42, 2024;

- B. Wu, J. Sun, Q. Huang, X. Yuan, Overlapped grouping measurement: A unified framework for measuring quantum states, Quantum 7, 896, 2023;

Quantum computing applications

Quantum chemistry

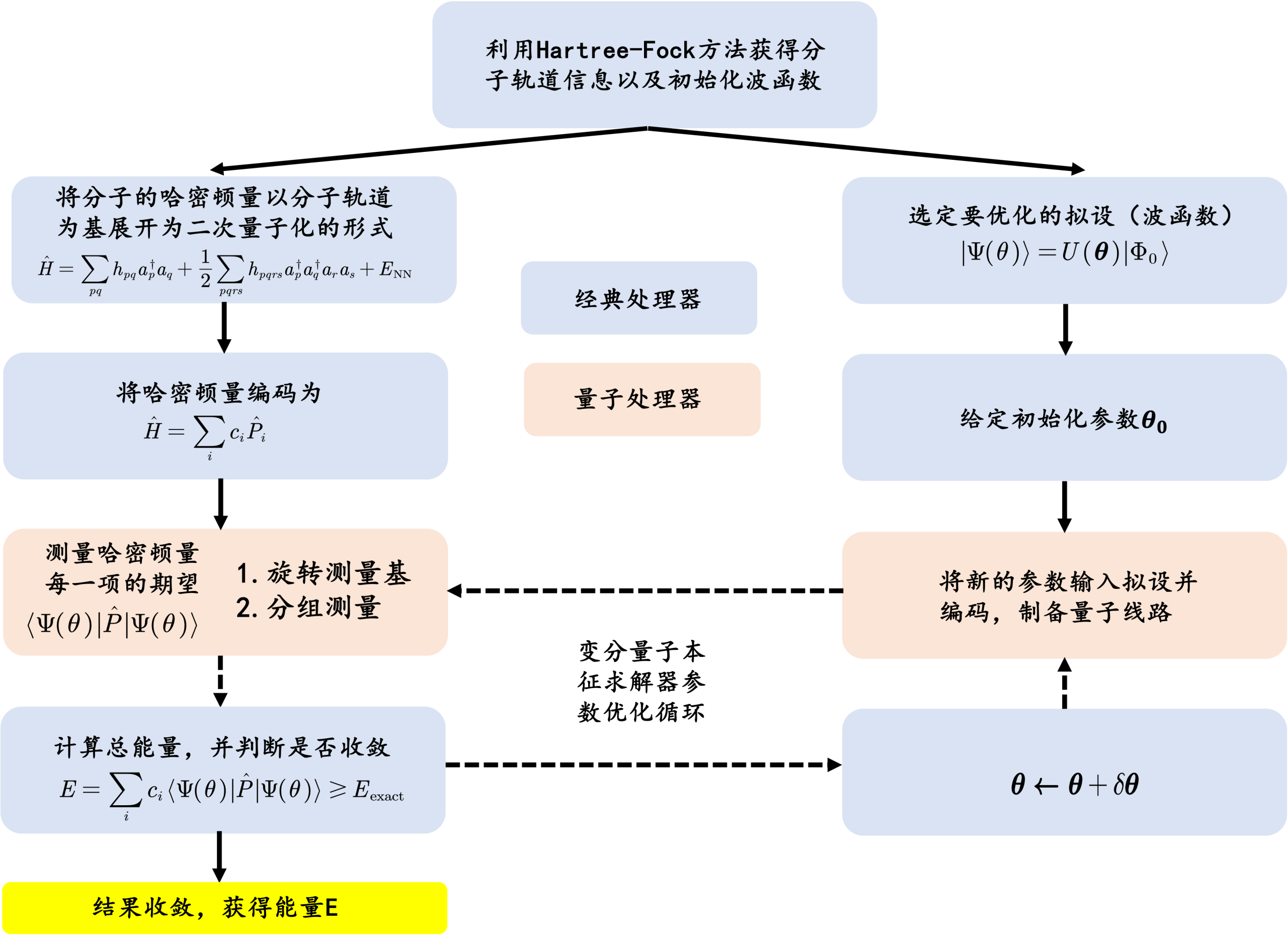

While fundamental quantum algorithms for computing ground states and simulating the dynamics of many-body systems were proposed decades ago, early research often focused on abstract models and overlooked the specific structure of real-world problems. With the rapid maturation of quantum technology, a critical frontier is bridging the gap between abstract quantum theory and practical applications in chemistry and materials science. Our work in chemistry focuses on two pillars: molecular vibrational spectroscopy and electronic structure theory.

Molecular Vibrational Spectra: Understanding molecular vibrations is essential for decoding light-matter interactions, which underpin mechanisms in solar cells, bio-imaging, and DNA radiation damage. Classical methods often struggle with anharmonic potentials due to exponential complexity. To address this, we developed a general quantum algorithm employing bosonic encoding and a problem-inspired ansatz. This approach allows for the efficient calculation of vibrational spectra under general potentials on near-term devices, overcoming the limitations of classical harmonic approximations [1].
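As a classical reference for the vibrational problem, the sketch below diagonalizes a single anharmonic mode, H = ω(a†a + ½) + λx⁴ with x = (a + a†)/√2 in dimensionless units. The Fock space is truncated to 2^q levels, which is the number of levels a binary encoding fits into q qubits. The model, parameters, and truncation are assumptions chosen for illustration. The quantum algorithm and ansatz of [1] are not reproduced.

```python
import numpy as np

def vibrational_levels(n_qubits, omega=1.0, lam=0.0):
    """Eigenvalues of H = omega (a^dag a + 1/2) + lam x^4 in 2**n_qubits Fock levels."""
    d = 2 ** n_qubits                              # binary encoding: d levels on n_qubits qubits
    a = np.diag(np.sqrt(np.arange(1, d)), k=1)     # truncated annihilation operator
    x = (a + a.T) / np.sqrt(2)                     # dimensionless position operator
    H = omega * (a.T @ a + 0.5 * np.eye(d)) + lam * np.linalg.matrix_power(x, 4)
    return np.linalg.eigvalsh(H)

harmonic = vibrational_levels(n_qubits=3)             # 8 levels on 3 qubits
anharmonic = vibrational_levels(n_qubits=3, lam=0.1)
print(harmonic[:3])                    # omega * (n + 1/2): 0.5, 1.5, 2.5
print(anharmonic[1] - anharmonic[0])   # fundamental transition, shifted by the quartic term
```

Only the lowest levels are reliable here. Truncating x before raising it to the fourth power distorts matrix elements near the top of the retained space.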

Electronic Structure Theory: As the core of computational chemistry, electronic structure calculation is vital for understanding reaction mechanisms. However, classical approximation methods frequently fail in strongly correlated systems. Leveraging the natural advantage of quantum computing in this domain, I have developed numerous algorithms validated by theory and numerical simulations. I was invited to co-author a comprehensive review in Reviews of Modern Physics [2], which established the theoretical framework for quantum computational chemistry. Furthermore, based on my contributions to the field, I was invited to write a sole-author Perspective in Science [3]. This piece analyzed the significance of Google’s Hartree-Fock experiment and provided a roadmap for achieving practical quantum advantage in chemistry.

Scalable Hybrid Methods & Drug Discovery: To tackle large-scale systems with limited qubits, we have pioneered hybrid quantum-classical approaches. Notably, by integrating Density Matrix Embedding Theory (DMET) with variational solvers, we simulated a C18 molecule (144 spin-orbitals) using only 16 qubits [4], demonstrating a viable path for scaling up quantum chemistry. We further introduced a hybrid quantum-classical algorithm based on periodic density matrix embedding theory that partitions unit cells into orbital fragments [5]. This enables accurate ab initio simulations of strongly correlated solid-state materials with quantum resource requirements low enough for near-term devices. Recently, we extended these techniques to pharmaceutical applications, combining the Quantum Approximate Optimization Algorithm (QAOA) with digitized counter-diabatic driving to solve molecular docking problems [6], pushing quantum computing toward practical drug discovery.
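The sketch below shows a generic depth-one QAOA on a toy MaxCut instance, using a dense state-vector simulation and a grid search over (γ, β). It omits the molecular-docking encoding and the digitized counter-diabatic terms used in [6]. The instance, the grid, and the function names are assumptions.

```python
import numpy as np
from itertools import product

def maxcut_costs(n, edges):
    """Diagonal of the cost Hamiltonian: number of cut edges for every bitstring."""
    return np.array([sum(bits[u] != bits[v] for u, v in edges)
                     for bits in product([0, 1], repeat=n)], dtype=float)

def qaoa_p1_expectation(gamma, beta, costs, n):
    """<C> after one QAOA layer exp(-i beta sum_j X_j) exp(-i gamma C) applied to |+>^n."""
    psi = np.full(2 ** n, 2 ** (-n / 2), dtype=complex)    # |+>^n
    psi = np.exp(-1j * gamma * costs) * psi                  # cost layer is diagonal
    rx = np.array([[np.cos(beta), -1j * np.sin(beta)],
                   [-1j * np.sin(beta), np.cos(beta)]])      # exp(-i beta X)
    mixer = np.array([[1.0 + 0j]])
    for _ in range(n):
        mixer = np.kron(mixer, rx)
    psi = mixer @ psi
    return float(np.real(np.vdot(psi, costs * psi)))

# Toy instance (assumed): MaxCut on a triangle, whose optimum cuts 2 of the 3 edges
n, edges = 3, [(0, 1), (1, 2), (0, 2)]
costs = maxcut_costs(n, edges)
grid = [(g, b) for g in np.linspace(0, np.pi, 61) for b in np.linspace(0, np.pi / 2, 31)]
best_value, best_angles = max((qaoa_p1_expectation(g, b, costs, n), (g, b)) for g, b in grid)
print(best_value, best_angles)
```

At γ = 0 the circuit leaves |+⟩^{⊗n} unchanged up to a phase, giving the random-guess value of 1.5 cut edges. Any improvement over that baseline comes from the cost layer.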

- S. McArdle, A. Mayorov, X. Shan, S. Benjamin, X. Yuan, Quantum computation of molecular vibrations, Chem. Sci. 10, 5725-5735, 2019;

- S. McArdle, S. Endo, A. Aspuru-Guzik, S. Benjamin, X. Yuan, Quantum computational chemistry, Rev. Mod. Phys. 92, 015003, 2020;

- X. Yuan, A quantum-computing advantage for chemistry, Science 369 (6507), 1054-1055, 2020 (Perspective);

- W. Li, Z. Huang, C. Cao, Y. Huang, Z. Shuai, X. Sun, J. Sun, X. Yuan, D. Lv, Toward Practical Quantum Embedding Simulation of Realistic Chemical Systems on Near-term Quantum Computers, Chem. Sci. 13 (31), 8953-8962, 2022;

- C. Cao, J. Sun, X. Yuan, H. Hu, H. Pham, D. Lv, Ab initio Quantum Simulation of Strongly Correlated Materials with Quantum Embedding, npj Computational Materials 9 (1), 78, 2023;

- QM. Ding, YM. Huang, X. Yuan, Molecular docking via quantum approximate optimization algorithm, Phys. Rev. Applied 21 (3), 034036, 2024;

Quantum + AI

Quantum Machine Learning (QML) and Variational Quantum Algorithms (VQAs) constitute the most promising pathway for utilizing Noisy Intermediate-Scale Quantum (NISQ) hardware. However, realizing their potential requires overcoming substantial hurdles regarding resource efficiency, model expressivity, and noise robustness. Our research addresses these challenges by establishing rigorous theoretical bounds and developing practical algorithms that bridge the gap between abstract models and physical implementation. We focus on three key areas: quantifying the capabilities of quantum models, designing algorithms for fundamental mathematical and physical problems, and accelerating optimization to reduce resource overhead.

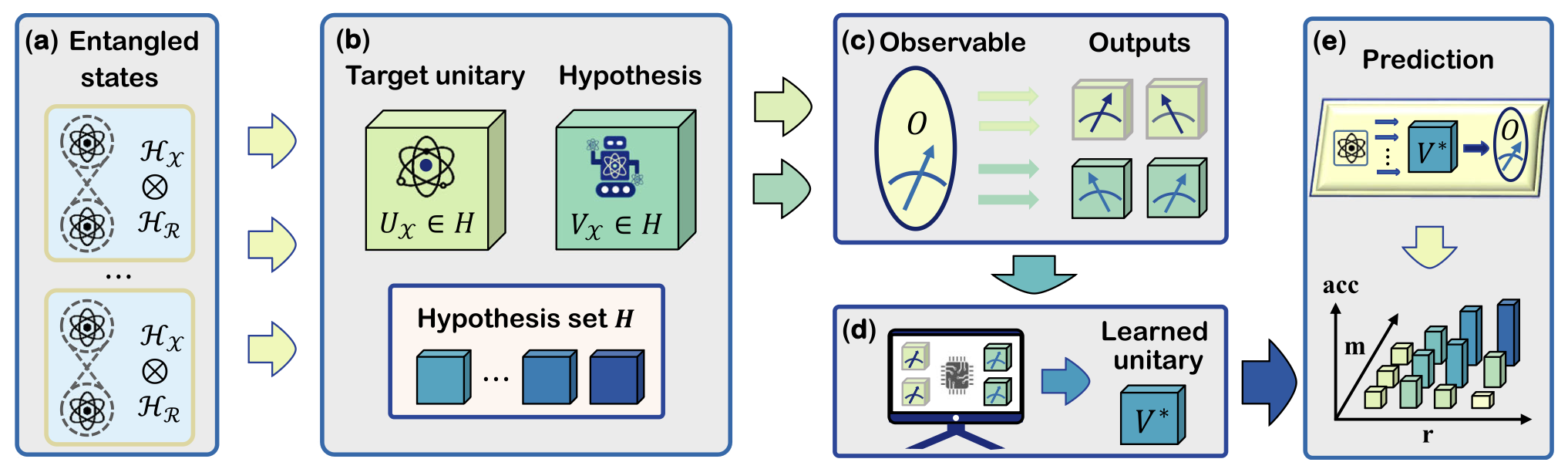

Theoretical Foundations: Expressivity, Complexity, and Data

To design powerful QML models, one must first understand their theoretical limits. In [1], we introduced the covering number—a tool from statistical learning theory—to quantitatively measure the expressivity of VQAs. We demonstrated that expressivity is exponentially controlled by the number of trainable gates and the qudit dimension, providing a design guide for potent ansatz construction. Regarding data resources, we established a quantum no-free-lunch theorem in [2], revealing the transitional role of entangled data. We showed that while entanglement reduces prediction error given sufficient measurements, it can be detrimental in measurement-limited regimes, offering crucial guidance for early-stage quantum protocols. Furthermore, in [3], we developed an algorithm to predict the complexity of weakly noisy quantum states, achieving near-optimal sample complexity by leveraging classical shadow representations and the intrinsic structure of quantum circuit architectures.
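Classical shadows, mentioned above as an ingredient of [3], compress a state into randomized single-qubit measurement records. The sketch below is the standard random-Pauli shadow for one qubit. Each shot measures in a random X, Y, or Z basis and stores the snapshot 3U†|b⟩⟨b|U − I, and the average of these snapshots is an unbiased estimate of ρ. The learning algorithm of [3] itself is not shown. The fixed seed, shot count, and names are illustrative assumptions.

```python
import numpy as np

H_GATE = np.array([[1, 1], [1, -1]]) / np.sqrt(2)
S_DAG = np.diag([1, -1j])
# Unitaries rotating the X, Y, Z eigenbases onto the computational basis
ROTATIONS = {"X": H_GATE, "Y": H_GATE @ S_DAG, "Z": np.eye(2)}

def single_qubit_shadow(psi, shots, rng):
    """Average of snapshots 3 U^dag|b><b|U - I under random Pauli-basis measurements."""
    estimate = np.zeros((2, 2), dtype=complex)
    for _ in range(shots):
        U = ROTATIONS[rng.choice(["X", "Y", "Z"])]
        probs = np.abs(U @ psi) ** 2
        b = rng.choice(2, p=probs / probs.sum())    # simulated measurement outcome
        ket = U.conj().T[:, b]                      # U^dag |b>
        estimate += 3 * np.outer(ket, ket.conj()) - np.eye(2)
    return estimate / shots

rng = np.random.default_rng(0)
psi = np.array([1.0, 0.0])                          # |0>, so <Z> = 1 and <X> = 0
rho_hat = single_qubit_shadow(psi, shots=20000, rng=rng)
Z = np.diag([1.0, -1.0])
X = np.array([[0.0, 1.0], [1.0, 0.0]])
print(np.trace(Z @ rho_hat).real, np.trace(X @ rho_hat).real)
```

Individual snapshots are not physical density matrices, but every snapshot has unit trace, and their average converges to ρ.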

Algorithmic Innovations for Math and Physics

Moving beyond theory, we design variational protocols for specific computational tasks. In [4], we proposed variational algorithms for core linear algebra problems, such as solving linear systems. By mapping the solution to the ground state of a many-body Hamiltonian and using adaptive ansätze, this method allows solutions to be verified on NISQ devices. In physics, we introduced the Quantum Kernel Alphatron (QKA) algorithm in [5] to solve quantum phase recognition problems. This approach reduces predictions to computing overlaps between quantum states. It demonstrates efficiency and robustness in regimes where classical hardness is provable under standard complexity assumptions.
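One common way to map a linear system Ax = b to a ground-state problem, assumed here for illustration, uses H = A†(I − |b⟩⟨b|)A. For invertible A this Hamiltonian is positive semidefinite and has zero energy exactly along the direction of x = A⁻¹b. The sketch below replaces the variational search of [4] with exact diagonalization, only to check that the ground state encodes x/‖x‖. The random test matrix is an assumption.

```python
import numpy as np

def linear_system_hamiltonian(A, b):
    """H = A^dag (I - |b><b|) A: positive semidefinite, annihilates x = A^{-1} b."""
    b = b / np.linalg.norm(b)
    projector = np.eye(len(b)) - np.outer(b, b.conj())
    return A.conj().T @ projector @ A

rng = np.random.default_rng(1)
M = rng.normal(size=(4, 4))
A = M @ M.T + np.eye(4)            # assumed test matrix; eigenvalues >= 1, so invertible
b = rng.normal(size=4)

H = linear_system_hamiltonian(A, b)
evals, evecs = np.linalg.eigh(H)
ground = evecs[:, 0]               # lowest-energy eigenvector
x = np.linalg.solve(A, b)
overlap = abs(np.vdot(ground, x / np.linalg.norm(x)))
print(evals[0], overlap)           # approximately 0 and 1: the ground state encodes x/|x|
```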

Accelerating Large-Scale Optimization

A major bottleneck for VQAs is the prohibitive cost of parameter optimization. To address this, we proposed PALQO [6], a physics-informed protocol that reformulates training dynamics as a nonlinear partial differential equation. By using Physics-Informed Neural Networks (PINNs) to learn the optimization trajectory from a small amount of initial quantum data, PALQO predicts subsequent parameter updates classically. This method achieves up to a 30x speedup and a 90

- Y. Du, Z. Tu, X. Yuan, D. Tao, An efficient measure for the expressivity of variational quantum algorithms, Phys. Rev. Lett. 128 (8), 080506, 2022;

- X. Wang, Y. Du, Z. Tu, Y. Luo, X. Yuan, D. Tao, Transition role of entangled data in quantum machine learning, Nature Communications 15, 3716, 2024;

- Y. Wu, B. Wu, Y. Song, X. Yuan, J. Wang, Learning the Complexity of Weakly Noisy Quantum States, The 13th International Conference on Learning Representations (ICLR 2025), Singapore, Apr. 24-28, 2025;

- X. Xu, J. Sun, S. Endo, Y. Li, S. C. Benjamin, X. Yuan, Variational algorithms for linear algebra, Science Bulletin 66, 2181-2188, 2021;

- Y. Wu, B. Wu, J. Wang, X. Yuan, Quantum Phase Recognition via Quantum Kernel Methods, Quantum 7, 981, 2023;

- Y. Huang, Y. Hao, J. Zhou, X. Yuan, X. Wang, Y. Du, PALQO: Physics-informed Model for Accelerating Large-scale Quantum Optimization, NeurIPS 2025;

Quantum experiments

Experimental realizations of quantum algorithms

Achieving quantum advantage in the Noisy Intermediate-Scale Quantum (NISQ) era requires a tight integration of theoretical innovation and experimental hardware control. My research focuses on this intersection, where I serve as the lead theorist in experimental collaborations. We aim to push the physical limits of current devices by co-designing algorithms, measurement strategies, and error mitigation techniques that are tailored to the specific constraints of superconducting quantum processors. Our work spans three critical areas: optimizing foundational readout tools, scaling quantum entanglement, and benchmarking practical quantum chemistry.

Optimizing Readout and Fidelity

Efficiently extracting information and combating noise are prerequisites for high-performance experiments. In [1], we systematically analyzed Hamiltonian measurement strategies and proposed a highly efficient readout scheme based on classical shadows. This approach reduced the measurement overhead by one to two orders of magnitude compared to traditional methods, playing a crucial role in enabling the readout of complex quantum states. To address hardware noise, we proposed a general quantum error mitigation framework in [2]. This method allows high-quality quantum entanglement and coherence to be extracted from non-ideal resources, providing a safeguard for precision tasks in both quantum computing and communication.

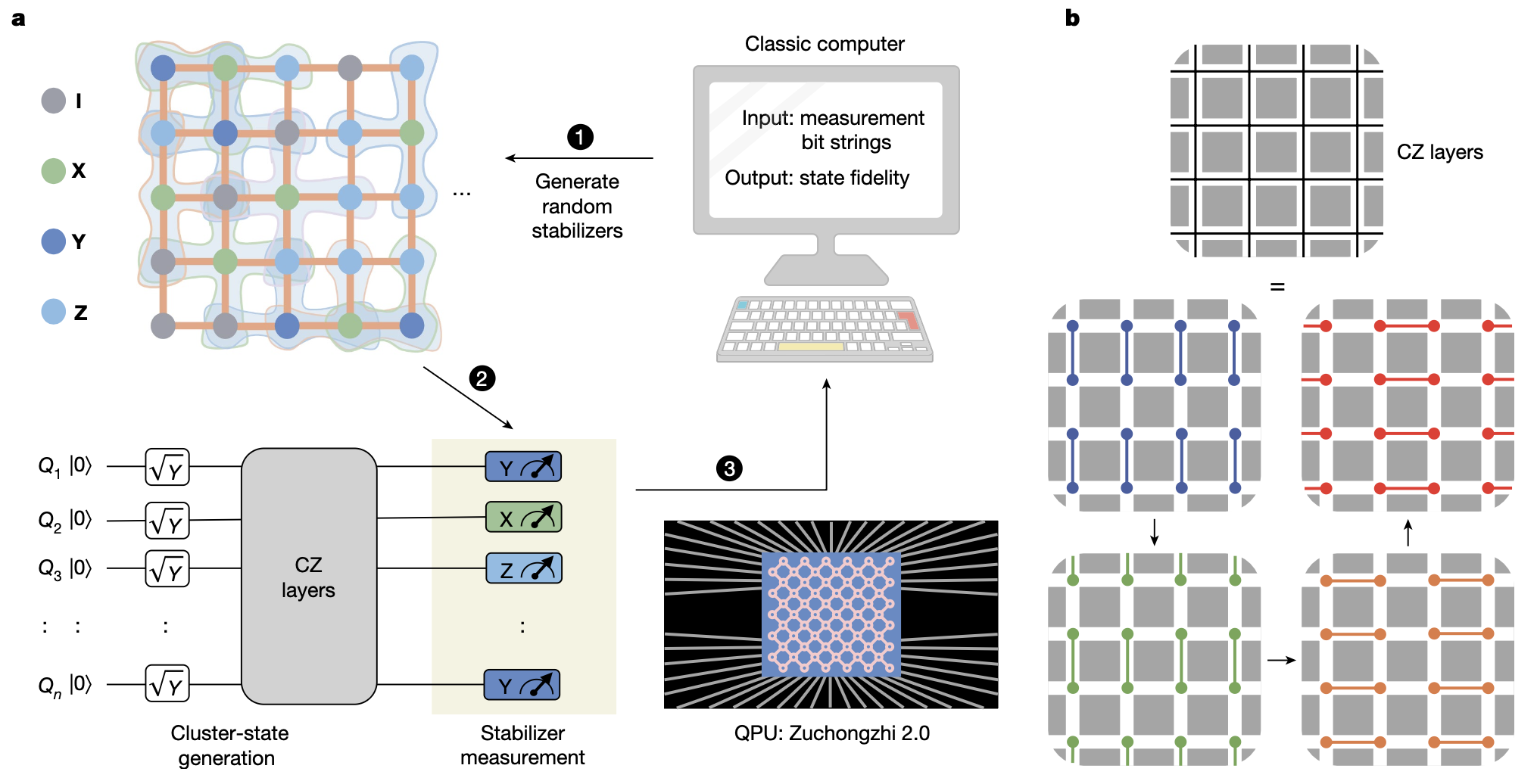

Scaling Entanglement for Measurement-Based Computing

Entanglement is the core resource of quantum computing, and the scale of preparable entangled states dictates the complexity of executable algorithms. In [3], together with experimental collaborators, we explored the scalability limits of superconducting processors and generated a 51-qubit cluster state. This was the largest of its kind to date and a twofold increase over previous records. We used randomized measurement techniques to verify the entanglement. We also demonstrated a measurement-based variational quantum eigensolver (VQE) that computes the ground state energy of a perturbed surface code. This work not only breaks through the entanglement preparation barrier but also establishes a solid experimental foundation for future measurement-based quantum computing.
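A cluster state is defined by its stabilizers. After CZ gates are applied between neighbours of |+⟩^{⊗n}, each K_i = Z_{i−1}X_iZ_{i+1} has expectation value +1. The toy check below builds a 4-qubit linear cluster state as a dense vector and evaluates these stabilizers exactly. It is only illustrative, since the 51-qubit experiment in [3] verified entanglement with randomized measurements. The one-dimensional geometry and the helper names are assumptions.

```python
import numpy as np
from functools import reduce

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.diag([1.0, -1.0])

def linear_cluster_state(n):
    """CZ on every neighbouring pair applied to |+>^n (qubit 0 is the most significant bit)."""
    dim = 2 ** n
    psi = np.full(dim, dim ** -0.5)
    for idx in range(dim):
        bits = [(idx >> (n - 1 - k)) & 1 for k in range(n)]
        parity = sum(bits[k] * bits[k + 1] for k in range(n - 1))
        psi[idx] *= (-1) ** parity         # each CZ contributes -1 when both qubits are 1
    return psi

def cluster_stabilizer(n, i):
    """K_i = Z_{i-1} X_i Z_{i+1}; boundary qubits have a single Z neighbour."""
    ops = [I2] * n
    ops[i] = X
    if i > 0:
        ops[i - 1] = Z
    if i < n - 1:
        ops[i + 1] = Z
    return reduce(np.kron, ops)

n = 4
psi = linear_cluster_state(n)
expectations = [psi @ cluster_stabilizer(n, i) @ psi for i in range(n)]
print(expectations)   # each stabilizer has expectation value +1 on the ideal cluster state
```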

Benchmarking Quantum Chemistry with Complex Ansätze

Moving beyond classically simulatable methods (like Hartree-Fock), demonstrating quantum advantage in chemistry requires implementing complex wavefunctions, such as the Unitary Coupled Cluster (UCC) ansatz. Prior to our work, experimental implementations of UCC were limited to 3 qubits due to hardware constraints. In [4], we combined state-of-the-art theoretical optimization with experimental control to probe the limits of electronic structure calculations. We optimized circuit design, measurement strategies, and error mitigation, which together yielded improvements of over two orders of magnitude. With these optimizations, we achieved the first experimental implementation of a 12-qubit UCC ansatz, the largest to date. We computed ground state energies for H2, LiH, and F2, achieving chemical accuracy in specific instances and providing strong evidence for the potential of NISQ devices in computational chemistry.

- T. Zhang, J. Sun, X. Fang, X. Zhang, X. Yuan, H. Lu, Experimental quantum state measurement with classical shadows, Phys. Rev. Lett. 127, 200501, 2021;

- X. Yuan, B. Regula, R. Takagi, M. Gu, Virtual quantum resource distillation, Phys. Rev. Lett. 132 (5), 050203, 2024;

- S. Cao, B. Wu, et al., Generation of genuine entanglement up to 51 superconducting qubits, Nature 619, 738-742, 2023;

- S. Guo, J. Sun, H. Qian, M. Gong, Y. Zhang, F. Chen, Y. Ye, Y. Wu, S. Cao, K. Liu, C. Zha, C. Ying, Q. Zhu, H. Huang, Y. Zhao, S. Li, J. Yu, D. Fan, D. Wu, H. Su, H. Deng, H. Rong, Y. Li, K. Zhang, T. Chung, F. Liang, J. Lin, Y. Xu, L. Sun, C. Guo, N. Li, Y. Huo, C. Peng, C. Lu, X. Yuan, X. Zhu, J. Pan, Scalable quantum computational chemistry with superconducting qubits, Nature Physics 20, 1240-1246, 2024;